This article was written by Tomasz Malisiewicz.

You might go to a cutting-edge machine learning research conference like NIPS hoping to find some mathematical insight that will help you take your deep learning system’s performance to the next level. Unfortunately, as Andrew Ng reiterated to a live crowd of 1,000+ attendees this past Monday, there is no secret AI equation that will let you escape your machine learning woes. All you need is some rigor, and much of what Ng covered in his remarkable NIPS 2016 presentation, titled “The Nuts and Bolts of Building Applications using Deep Learning”, is not rocket science. Today we’ll dissect the lecture and Ng’s key takeaways. Let’s begin.

Figure 1. Andrew Ng delivers a powerful message at NIPS 2016.

Andrew Ng and the Lecture:


Andrew Ng’s lecture at NIPS 2016 in Barcelona was phenomenal, truly one of the best presentations I have seen in a long time. Combining two influential presentation styles, the CEO style and the Professor style, Andrew Ng mesmerized the audience for two hours. His experience managing large-scale AI projects at Baidu, Google, and Stanford really shows. In his talk, Ng addressed one of the key challenges facing most of the NIPS audience: how do you make your deep learning systems better? Rather than showing off new research findings from his cutting-edge projects, Andrew Ng presented a simple recipe for analyzing and debugging today’s large-scale systems. Using no equations, just a handful of diagrams and several checklists, he delivered a two-whiteboards-in-front-of-a-video-camera lecture, something you would expect at a group research meeting. However, Ng made sure not to delve into Research-y areas, the kind that are likely to make your brain fire on all cylinders but will make you and your company very few dollars in the foreseeable future.


Money-making deep learning vs Idea-generating deep learning:


Andrew Ng highlighted the fact that while NIPS is a research conference, many of the newly generated ideas are simply ideas, not yet battle-tested vehicles for converting mathematical acumen into dollars. The bread and butter of money-making deep learning is supervised learning, with recurrent neural networks such as LSTMs in second place. Research areas such as Generative Adversarial Networks (GANs), Deep Reinforcement Learning (Deep RL), and just about anything branding itself as unsupervised learning are simply Research, with a capital R. These ideas are likely to influence the next 10 years of Deep Learning research, so it is wise to focus on publishing and tinkering if you really love such open-ended Research endeavours. Applied deep learning research is much more about taming your problem (understanding the inputs and outputs), casting the problem as a supervised learning problem, and hammering it with ample data and ample experiments.


“It takes surprisingly long time to grok bias and variance deeply, but people that understand bias and variance deeply are often able to drive very rapid progress.” –Andrew Ng


The 5-step method of building better systems:


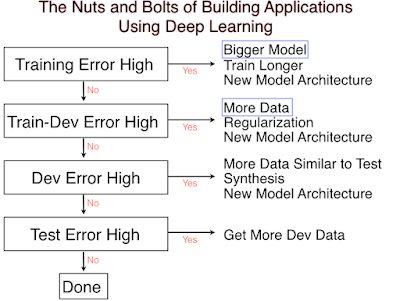

Most issues in applied deep learning come from a training-data / testing-data mismatch. In some scenarios this issue just doesn’t come up, but you’d be surprised how often applied machine learning projects use training data (which is easy to collect and annotate) that differs from the data seen in the target application. Andrew Ng’s discussion is centered around the basic idea of the bias-variance tradeoff. You want a classifier with a good ability to fit the data (low bias is good) that also generalizes to unseen examples (low variance is good). Too often, applied machine learning projects running at scale forget this critical dichotomy. Here are the four numbers you should always report:

- Training set error

- Testing set error

- Dev (aka Validation) set error

- Train-Dev (aka Train-Val) set error
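To make the four numbers concrete, here is a minimal Python sketch of how they might be computed. It assumes that the train-dev set is a slice held out from the training data (so it shares the training distribution but is never trained on), and that `predict` is any function mapping an input to a label. The names `error_rate`, `carve_train_dev`, and `four_errors` are hypothetical helpers invented for this illustration, not something shown in the lecture.

```python
import random


def error_rate(predict, examples):
    """Return the fraction of (x, y) pairs for which predict(x) != y."""
    if not examples:
        raise ValueError("examples must be non-empty")
    wrong = sum(1 for x, y in examples if predict(x) != y)
    return wrong / len(examples)


def carve_train_dev(train_examples, fraction=0.1, seed=0):
    """Hold out a random slice of the training data as a train-dev set.

    Assumption: the held-out slice comes from the same distribution as the
    training data and is not used for fitting the model.
    Returns (remaining_train, train_dev).
    """
    shuffled = list(train_examples)
    random.Random(seed).shuffle(shuffled)
    n_held_out = max(1, int(len(shuffled) * fraction))
    return shuffled[n_held_out:], shuffled[:n_held_out]


def four_errors(predict, train, train_dev, dev, test):
    """Collect the four error numbers in one report."""
    return {
        "train": error_rate(predict, train),
        "train_dev": error_rate(predict, train_dev),
        "dev": error_rate(predict, dev),
        "test": error_rate(predict, test),
    }


if __name__ == "__main__":
    # Toy data: the label is True when x is positive, with one noisy example.
    easy_data = [(x, x > 0) for x in range(-5, 6)] + [(7, False)]
    target_data = [(x, x > 1) for x in range(-3, 4)]  # shifted distribution

    train, train_dev = carve_train_dev(easy_data, fraction=0.25)
    report = four_errors(lambda x: x > 0, train, train_dev,
                         dev=target_data, test=target_data)
    for name, err in report.items():
        print(f"{name:>9}: {err:.2%}")
```

Keeping the train-dev slice separate is what lets you tell a generalization problem apart from a mismatch between the training data and the target application.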

Andrew Ng suggests the following recipe:

Figure 2. Andrew Ng’s “Applied Bias-Variance for Deep Learning Flowchart” for building better deep learning systems.
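The flowchart itself is not reproduced in this text, so what follows is only one plausible reading of how the four error numbers could be checked in order, written as a short Python sketch. The function name `diagnose`, the `target_error` and `tolerance` parameters, and the returned labels are all assumptions made for illustration; they are not taken from Ng’s slide.

```python
def diagnose(errors, target_error=0.0, tolerance=0.02):
    """Walk the four error numbers in order and name the first large gap.

    `errors` is a dict with keys "train", "train_dev", "dev", and "test".
    Assumed interpretation of each gap (hypothetical labels):
      target    -> train     : "bias"          (model underfits)
      train     -> train_dev : "variance"      (model fails to generalize)
      train_dev -> dev       : "data_mismatch" (training data unlike target)
      dev       -> test      : "dev_overfit"   (tuned too hard on the dev set)
    """
    steps = [
        ("bias", target_error, errors["train"]),
        ("variance", errors["train"], errors["train_dev"]),
        ("data_mismatch", errors["train_dev"], errors["dev"]),
        ("dev_overfit", errors["dev"], errors["test"]),
    ]
    for label, previous, current in steps:
        if current - previous > tolerance:
            return label
    return "ok"


if __name__ == "__main__":
    report = {"train": 0.01, "train_dev": 0.02, "dev": 0.10, "test": 0.10}
    print(diagnose(report))  # the first large gap is between train_dev and dev
```

Checking the gaps in a fixed order means each fix is attempted only after the earlier, more basic problem has been ruled out.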
